On April 4, 2026, Anthropic pulled the plug on OAuth access for third-party tools. If you were using OpenClaw, NanoClaw, or any other framework that routed requests through your Claude Pro or Max subscription — it stopped working today. Your workflows are broken. Your agents are down.

I've been running a multi-agent orchestration system on Claude Code for over nine months. It wasn't affected. Not because I found a workaround or exploit, but because it never used OAuth in the first place. The system spawns official claude CLI processes and lets them handle their own authentication. That's it. No tokens, no gateway, no SDK calls.

This post isn't about selling you my tool. It's about a pattern — CLI subprocess orchestration — that is architecturally immune to the kind of policy change Anthropic just made. If you're looking for an OpenClaw alternative that won't break the next time a provider changes their auth rules, this is the approach worth understanding.

What Anthropic Actually Changed

Here's the policy, straight from Anthropic's updated terms:

"Using OAuth tokens obtained through Claude Free, Pro, or Max accounts in any other product, tool, or service — including the Agent SDK — is not permitted and constitutes a violation of the Consumer Terms of Service."

The enforcement timeline:

- January 2026 — Anthropic engineer Thariq Shihipar signaled on social media that enforcement would be tightened

- February–March 2026 — Server-side safeguards quietly blocked subscription OAuth tokens from working outside official Claude products

- February 20, 2026 — Anthropic updated documentation to make the ban explicit

- April 4, 2026 — Full enforcement. Pro/Max subscriptions only cover Claude Code, claude.ai, Claude Desktop, and Claude Cowork

Anthropic announced the enforcement alongside transition support: one-time credits and API prepurchase discounts for affected users.

The reason is straightforward: token arbitrage. A $200/month Max subscription was generating significant API-equivalent workloads through third-party tools — far exceeding what the subscription was priced for. OpenClaw users were getting enterprise-grade API access at consumer prices. Anthropic closed the gap.

The Claude Code legal docs now state plainly: "Anthropic does not permit third-party developers to offer Claude.ai login or to route requests through Free, Pro, or Max plan credentials on behalf of their users."

Why My System Wasn't Affected

VNX Orchestration is the multi-agent system I've been building and running in production since late 2025. It coordinates up to four Claude Code instances working in parallel — one orchestrator (T0) and three workers (T1, T2, T3) — with governance layers between them.

When the OAuth ban hit, nothing changed. Not a single line of code needed updating. Here's why:

VNX doesn't call Anthropic's API. It doesn't store OAuth tokens. It doesn't import the Anthropic SDK. The only thing it does is spawn official claude CLI processes — the same binary you run when you type claude in your terminal. The binary handles authentication internally. VNX never touches credentials.

This isn't a clever workaround. It's how the system was designed from day one. I never wanted to build a harness. I wanted to build an orchestration layer — something that governs what happens between agents, not something that pretends to be an API gateway.

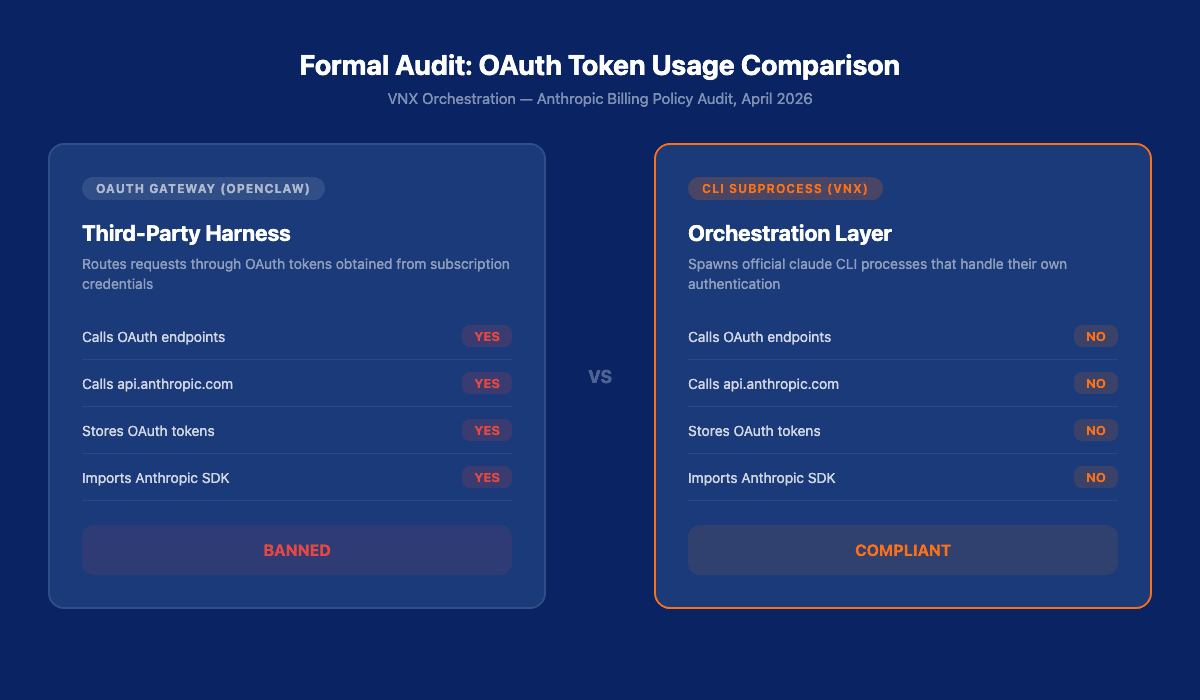

The Formal Audit

To be sure I wasn't accidentally in violation, I ran a formal audit of the entire VNX codebase. Four questions, definitive answers:

| Question | Result |
|---|---|
| Does any code call Anthropic's OAuth endpoints or use stored OAuth tokens? | NO |
| Does any code call api.anthropic.com using subscription credentials? | NO |
| Does it only launch claude CLI processes that authenticate themselves? | YES |
| Are there HTTP clients targeting Anthropic endpoints in executable code? | NO |

The audit scanned every file in the repository. Here's what it found:

- Anthropic domain references — every hit was inert: documentation hyperlinks, comments, commit template strings. Zero in executable code.

- OAuth/Bearer tokens — 5 matches, all targeting Google Vertex AI (gate_runner.py:207) and Perplexity (.mcp.json). Zero targeting any Anthropic domain.

- HTTP clients — 7 matches: urllib.request calls to googleapis.com and localhost (Ollama), fetch() calls to local dashboard API. The single curl reference to api.anthropic.com is in a documentation file, not executable code.

- Anthropic SDK imports — zero. No @anthropic-ai/sdk, no anthropic Python package anywhere in the codebase.

The only outbound API calls go to Google Vertex AI (for Gemini routing) and Perplexity (for research). Zero HTTP calls to any Anthropic-owned domain in executable code.
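A scan of this kind can be approximated in a few dozen lines of Python. The sketch below is not the audit script from the repository: the pattern list, the set of file extensions treated as documentation, and the names `scan_repo` and `executable_hits` are all assumptions made for illustration.

```python
import re
from pathlib import Path

# Hypothetical search patterns; a real audit may look for different strings.
PATTERNS = {
    "anthropic_domain": re.compile(r"anthropic\.com"),
    "sdk_import": re.compile(r"@anthropic-ai/sdk|^\s*(?:import|from)\s+anthropic\b"),
    "bearer_token": re.compile(r"Bearer\s"),
}

# Assumption: files with these suffixes are documentation, not executable code.
DOC_SUFFIXES = {".md", ".txt", ".rst"}


def scan_repo(root: str) -> list[dict]:
    """Return one finding per (file, line, pattern) match under root."""
    findings = []
    for path in sorted(Path(root).rglob("*")):
        if not path.is_file() or ".git" in path.parts:
            continue
        try:
            text = path.read_text(encoding="utf-8")
        except (UnicodeDecodeError, OSError):
            continue  # skip binary or unreadable files
        for lineno, line in enumerate(text.splitlines(), start=1):
            for name, pattern in PATTERNS.items():
                if pattern.search(line):
                    findings.append({
                        "file": str(path.relative_to(root)),
                        "line": lineno,
                        "pattern": name,
                        "executable": path.suffix not in DOC_SUFFIXES,
                    })
    return findings


def executable_hits(findings: list[dict], pattern: str) -> list[dict]:
    """Keep only the matches for one pattern that sit in non-documentation files."""
    return [f for f in findings if f["pattern"] == pattern and f["executable"]]
```

A suffix check cannot tell a comment from a live call, so any hit this reports in a source file still needs a manual look before it is classified as inert.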

Here are the actual invocation patterns from the codebase:

Headless subprocess (scripts/lib/headless_adapter.py:59):

    subprocess.run(
        ["claude", "--print", "--output-format", "text"],
        input=prompt,
        capture_output=True,
        text=True
    )

Interactive terminal via tmux (scripts/commands/start.sh:687):

    tmux send-keys -t "$T0" "claude --model $t0_model $t0_flags" C-m

Provider command builder (scripts/lib/vnx_start_runtime.py:172):

    return f"claude --model {tc.model}{extra}{skip_flag}"

Every interaction with Claude goes through the official CLI binary. The binary authenticates itself. VNX is an orchestration layer on top, not a proxy in between. For the full technical breakdown of all four CLI invocation patterns — tmux, headless, one-shot, and provider builder — see part 3 of this series.

The full audit is available in the repository.

Read also: One Terminal to Rule Them All: How I Orchestrate Claude, Codex, and Gemini — The multi-provider architecture behind VNX's headless agent dispatch.

What VNX Actually Does

Let me be honest about what this system is — and isn't.

VNX is a multi-agent orchestration framework built on Glass Box Governance principles. The core idea: an orchestrator (T0, running Claude Opus) plans and distributes work to parallel workers (T1–T3), but every action goes through approval gates, deterministic quality checks, and an append-only audit trail.
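To make the gate-plus-audit-trail idea concrete, here is a minimal sketch of a deterministic check whose result is appended to an NDJSON ledger. It is an illustration under assumptions, not VNX's implementation: the names `GateResult`, `max_file_lines_gate`, and `append_receipt`, the 500-line limit, and the receipt fields are all hypothetical.

```python
import json
import time
from dataclasses import asdict, dataclass
from pathlib import Path


@dataclass
class GateResult:
    gate: str
    passed: bool
    detail: str


def max_file_lines_gate(path: str, limit: int = 500) -> GateResult:
    """Deterministic check: fail when a file has more than `limit` lines."""
    count = len(Path(path).read_text(encoding="utf-8").splitlines())
    return GateResult("max_file_lines", count <= limit, f"{count} lines (limit {limit})")


def append_receipt(ledger: str, dispatch_id: str, results: list[GateResult]) -> dict:
    """Append one JSON object as a new line; earlier lines are never rewritten."""
    receipt = {
        "ts": time.time(),
        "dispatch_id": dispatch_id,
        "approved": all(r.passed for r in results),
        "gates": [asdict(r) for r in results],
    }
    # Mode "a" only ever adds to the end of the file, which keeps the trail append-only.
    with open(ledger, "a", encoding="utf-8") as fh:
        fh.write(json.dumps(receipt) + "\n")
    return receipt
```

Because the gate is plain Python, it costs no model tokens and returns the same verdict on every run, which is what makes the ledger entry usable as evidence later.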

I started building this nine months ago — before OpenClaw existed, before Anthropic shipped Agent Teams. Back then, tmux and bash scripts were the only tools available for multi-agent coordination. A lot of that legacy is still visible in the codebase. But the architecture has evolved significantly.

What's working today:

- Multi-agent coordination — T0 orchestrator + 3 parallel workers running in 4 tmux terminals. T0 doesn't just distribute — it monitors progress, adjusts dispatches mid-flight, and steers workers when they drift

- Headless agents across providers — not just Claude. I've built headless gates for Gemini CLI and Codex review too. Workers aren't locked to one model

- Smart dispatches with intelligence injection — T0 doesn't dispatch blind. An FTS5 database stores patterns, learnings, and prevention rules. Before every dispatch, relevant intelligence gets **injected into the worker's context**. The agent starts with the knowledge the system has accumulated

- Self-learning loops — patterns agents adopt successfully get boosted. Patterns they ignore decay. The system doesn't just execute — it **learns from its own behavior** and feeds that back into future dispatches

- Quality gates — deterministic checks (file size, test coverage, blocker counts) that validate output without burning LLM tokens. This takes routine policing off T0, so it can focus on planning

- Receipt ledger — every dispatch, every output, every approval logged to an append-only NDJSON trail. When something breaks at 2 AM, I trace what happened in 30 seconds

- Full autonomous mode — queue popup for dispatch approval, then agents run without permission popups or dangerous-scope interruptions. I've completed multi-feature coding projects this way, including PR reviews

- Multi-feature chaining — orchestrated sequences where PR-0 feeds into PR-1, feeds into PR-2. Each with independent quality gates

- Open items tracking — unresolved issues persist across dispatches. Nothing falls through the cracks

- 87% token reduction via native skill architecture instead of template compilation

- Context rotation at 65% utilization — automatic handoff to fresh sessions without losing state
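The intelligence-injection and self-learning items above can be sketched with SQLite's FTS5 extension from the Python standard library. This is a rough illustration, not VNX's schema: the table names, the 1.2 and 0.8 boost and decay factors, and the functions `open_store`, `add_pattern`, `record_outcome`, `relevant_patterns`, and `inject` are all assumptions, and it requires a Python build whose SQLite includes FTS5.

```python
import re
import sqlite3


def open_store(path: str = ":memory:") -> sqlite3.Connection:
    db = sqlite3.connect(path)
    # Weights live in an ordinary table; the pattern text lives in an FTS5 index.
    db.execute("CREATE TABLE IF NOT EXISTS weights (id INTEGER PRIMARY KEY, weight REAL NOT NULL)")
    db.execute("CREATE VIRTUAL TABLE IF NOT EXISTS patterns USING fts5(body)")
    return db


def add_pattern(db: sqlite3.Connection, body: str, weight: float = 1.0) -> int:
    cur = db.execute("INSERT INTO weights(weight) VALUES (?)", (weight,))
    db.execute("INSERT INTO patterns(rowid, body) VALUES (?, ?)", (cur.lastrowid, body))
    return cur.lastrowid


def record_outcome(db: sqlite3.Connection, pattern_id: int, adopted: bool,
                   boost: float = 1.2, decay: float = 0.8) -> None:
    """Boost a pattern the agent adopted; decay one it ignored."""
    factor = boost if adopted else decay
    db.execute("UPDATE weights SET weight = weight * ? WHERE id = ?", (factor, pattern_id))


def relevant_patterns(db: sqlite3.Connection, task: str, limit: int = 3) -> list[tuple[int, str]]:
    """Full-text match on any word of the task, highest weight first."""
    tokens = re.findall(r"\w+", task)
    if not tokens:
        return []
    query = " OR ".join(f'"{t}"' for t in tokens)  # quoted terms avoid FTS5 syntax errors
    return db.execute(
        "SELECT patterns.rowid, patterns.body FROM patterns "
        "JOIN weights ON weights.id = patterns.rowid "
        "WHERE patterns MATCH ? ORDER BY weights.weight DESC LIMIT ?",
        (query, limit),
    ).fetchall()


def inject(db: sqlite3.Connection, task: str) -> str:
    """Prepend matching patterns to the task text before it is dispatched."""
    hits = relevant_patterns(db, task)
    if not hits:
        return task
    notes = "\n".join(f"- {body}" for _, body in hits)
    return f"Known patterns:\n{notes}\n\nTask:\n{task}"
```

Ranking by stored weight rather than by text relevance is a deliberate simplification here; a fuller version would likely combine the two.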

I built this as a solopreneur. It started as a hobby project that got out of hand — an experiment in whether I could make AI agents accountable instead of just autonomous. Nine months later, it's grown into something I rely on daily, first as a coding assistant and increasingly for business operations like content production and marketing.

What VNX Is NOT — The Honest Part

I'm not going to pretend this is a drop-in OpenClaw replacement. It isn't. Different paradigm, different problem space.

What VNX doesn't have:

- No messaging integration — no WhatsApp, Telegram, or Slack routing for end users. This is a developer's orchestration tool, not a personal assistant platform

- No 5,700-skill marketplace — VNX has focused skills, not a community registry

- No multi-tenant support — this is a single-operator system. One human, multiple agents

- No cross-platform portability (yet) — currently coupled to macOS + tmux

- ~20 GitHub stars, not 340K — let's be real about the maturity difference

What VNX needs help with:

- Installation is cumbersome. You need bash, Python 3, tmux, jq, and fswatch. I haven't prioritized DX because I've been the only user

- The tmux 3-window architecture is legacy — it's how I started nine months ago, and there's a lot of bash code that reflects that early design. It's fragile. Sessions can die and take in-flight work with them

- The architecture is evolving toward single-responsibility agents with dedicated folders instead of skill injection per terminal. Each agent gets its own workspace, its own context, its own rules — instead of sharing a generic terminal that could run anything

- The headless direction (moving from tmux to pure claude -p subprocess) addresses the fragility. The dashboard control pane (features 22–26) will replace tmux entirely — kanban view of dispatches, real-time agent status, governance digest, session controls in the browser. But neither is complete yet

I built this alone. If it's going to grow into something the community can use, I need contributors. More on that at the end.

OpenClaw Alternative? Here's the Honest Comparison

| Aspect | OpenClaw | VNX Orchestration |
|---|---|---|
| Philosophy | Personal assistant, any OS | Multi-agent governance with human gates |
| Trust model | Agent autonomy | Glass-box — every action through dispatch + approval |
| Auth method | OAuth gateway (now banned) | CLI subprocess (unaffected) |
| Orchestration | Skill pipelines (Lobster) | Terminal tracks (T0–T3) with role separation |
| Audit trail | Not a primary concern | NDJSON receipt ledger with gate evidence |
| Headless agents | acpx | claude -p + Gemini CLI + Codex review |
| Skill ecosystem | 5,700+ on ClawHub | Focused native skills (growing) |
| Security record | 200+ CVEs, supply chain attacks on ClawHub | No gateway = smaller attack surface |
| GitHub stars | 340,000+ | ~20 |
| Maturity | 430K lines, 3 frontends | Working prototype, battle-tested for my use |

The convergence is real: both treat agents as composable units, both have skill registries, both support headless execution, both care about workflow orchestration. But the divergence is fundamental. OpenClaw trusts agents and focuses on routing. VNX constrains agents and focuses on governance.

Could VNX evolve toward OpenClaw's model? In specific ways — yes. A skill marketplace, platform portability, declarative pipeline DSL. But governance stays non-negotiable. That's the part I think OpenClaw got wrong from the start.

The CLI Subprocess Pattern: Anyone Can Adopt This

You don't need VNX to use this pattern. The principle is simple:

Spawn the official CLI binary. Let it handle auth. Keep your orchestration layer clean.

    import subprocess

    def ask_claude(prompt: str) -> str:
        result = subprocess.run(
            ["claude", "--print", "--output-format", "text"],
            input=prompt,
            capture_output=True,
            text=True
        )
        return result.stdout

That's the entire integration. No SDK imports. No token management. No OAuth flows. The claude binary authenticates using your logged-in session. Your code never touches credentials.

This works for any CLI tool — Claude Code, Codex CLI, Gemini CLI. The pattern is the same: build on the product, not around it.

The key insight: when the platform changes its authentication rules — as Anthropic just did — your orchestration layer isn't affected. Because you never touched auth in the first place.
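For anything beyond a one-off call, the same pattern usually needs a timeout, an exit-code check, and a way to fan out to several workers. The sketch below is one way to do that, under assumptions: `AgentError`, `run_agent`, `run_parallel`, the 600-second default, and the choice of a thread pool are mine, not VNX's. The only CLI flags used are the ones shown earlier in this post, and the command is a parameter so another CLI can be swapped in.

```python
import subprocess
from concurrent.futures import ThreadPoolExecutor
from typing import Sequence

# The invocation from this post: the official CLI in print mode.
CLAUDE_CMD = ("claude", "--print", "--output-format", "text")


class AgentError(RuntimeError):
    """Raised when the CLI is missing, times out, or exits non-zero."""


def run_agent(prompt: str, cmd: Sequence[str] = CLAUDE_CMD, timeout: float = 600.0) -> str:
    """Send the prompt on stdin and return stdout; the CLI handles its own auth."""
    try:
        result = subprocess.run(
            cmd, input=prompt, capture_output=True, text=True, timeout=timeout
        )
    except subprocess.TimeoutExpired as exc:
        raise AgentError(f"{cmd[0]} timed out after {timeout}s") from exc
    except FileNotFoundError as exc:
        raise AgentError(f"{cmd[0]} was not found on PATH") from exc
    if result.returncode != 0:
        raise AgentError(f"{cmd[0]} exited {result.returncode}: {result.stderr.strip()}")
    return result.stdout


def run_parallel(prompts: Sequence[str], cmd: Sequence[str] = CLAUDE_CMD,
                 max_workers: int = 3) -> list[str]:
    """Run one subprocess per prompt and return outputs in prompt order."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        return list(pool.map(lambda p: run_agent(p, cmd), prompts))
```

Threads are enough here because each worker spends its time waiting on a child process. Nothing in this code reads, stores, or forwards a credential.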

Read also: The Cascade of Doom: When AI Agents Hallucinate in Chains — Why governance matters: what happens when agents trust each other's output without validation.

Why So Many Tools Built on OAuth

OAuth was seductive. A $200/month Max subscription gave you predictable costs for unpredictable workloads. No per-token billing. No surprise invoices. For autonomous agents that might run hundreds of requests per day, that's massively cheaper than API pricing.

The problem: it was building on someone else's authentication layer. And authentication layers are the first thing platforms lock down when they want to control access.

If you'd built on CLI subprocess from day one, today's announcement changes nothing for you. Your agents keep running. Your workflows stay intact. The lesson isn't that Anthropic is wrong for closing the gap — it's that depending on auth you don't control is an architectural risk.

Compliance: What's Actually Allowed

This matters, so I'll be explicit.

Important: Claude Pro and Max subscriptions are for your own business operations. Anthropic's terms specify "ordinary, individual usage" of Claude Code. Not for building commercial tools. Not for reselling access. Not for multi-tenant platforms.

I use VNX for my own work. It started as a coding assistant and has grown into my business operations tool — content production, marketing, project management. Everything runs under my own Max subscription, for my own work.

VNX does not:

- Share credentials with other users

- Proxy API access for third parties

- Operate as a multi-tenant service

- Generate revenue from Anthropic's auth

The CLI subprocess pattern respects this because the CLI binary enforces the user's own subscription. There's no way to share access through it — each user must be logged in on their own machine.

Could I get more observability by switching to the Anthropic API with direct API keys? Yes — structured event streaming, cooperative cancellation, real-time tool inspection. But the cost difference is real: $200/month Max subscription versus ~$3,000/month in equivalent API tokens for my workload. That's a 15x price difference for capabilities I can work around. The CLI subprocess pattern gives me full autonomous multi-agent orchestration at a fraction of the cost. What I trade is some observability and granular control — not core functionality.

What's Next

I'm sharing this for three reasons:

1. The pattern is more important than the tool. CLI subprocess orchestration works. If you adopt nothing from VNX except subprocess.run(["claude", ...]) and a quality gate between agent output and action, you'll be ahead of most frameworks.

2. Governance is the gap. OpenClaw has over 340K stars and over 200 CVEs in the National Vulnerability Database. Its skill marketplace has been hit by supply chain attacks. It had no audit trail, no quality gates, no human approval loops. The OAuth ban is a symptom. The root cause is that the trust model was wrong from the start.

3. I need help. This is a working prototype with ~20 stars built by one person. The architecture is sound — nine months of production use have validated that. But turning it into something the community can install and use requires more hands. I'm looking for contributors interested in governance-first AI orchestration, headless agent patterns, and dashboard development.

Start here:

- Repository: github.com/Vinix24/vnx-orchestration

- The subprocess patterns: scripts/lib/headless_adapter.py

- The orchestration entry point: scripts/commands/start.sh

- The formal audit: docs/compliance/

If you're building AI agents and the OAuth ban just disrupted your workflow — the CLI subprocess pattern is how you avoid this class of problem entirely. Build on the product. Not around it.


Vincent van Deth

AI Strategy & Architecture

I build production systems with AI — and I've spent the last six months figuring out what it actually takes to run them safely at scale.

My focus is AI Strategy & Architecture: designing multi-agent workflows, building governance infrastructure, and helping organisations move from AI experiments to auditable, production-grade systems. I'm the creator of VNX, an open-source governance layer for multi-agent AI that enforces human approval gates, append-only audit trails, and evidence-based task closure.

Based in the Netherlands. I write about what I build — including the failures.