The OAuth ban didn't kill OpenClaw. OpenClaw's own security record did that long before Anthropic pulled the plug.

On April 4, 2026, Anthropic enforced a policy that third-party tools can no longer use OAuth tokens from Pro/Max subscriptions. OpenClaw, with its 340,000+ GitHub stars, was the most visible casualty. But if you're looking for an OpenClaw alternative, the OAuth ban should be the least of your concerns.

The National Vulnerability Database lists over 200 CVEs for OpenClaw. Its skill marketplace was hit by supply chain attacks. Tens of thousands of instances were exposed to the internet with exploitable RCE vulnerabilities. The OAuth ban is a symptom. The architecture was the disease.

I've spent the last two months analyzing AI agent frameworks — not as a vendor picking fights, but as someone who builds multi-agent systems in production and needed to understand the landscape. This post is that analysis: what frameworks exist, how they handle authentication, which ones are affected by the ban, and which architectural patterns actually survive policy changes.

From Clawdbot to OAuth Ban: The Timeline

Understanding where OpenClaw went wrong requires understanding how fast it grew — and how little security kept pace.

| Date | Event |
|---|---|
| November 2025 | Peter Steinberger releases Clawdbot, a personal AI assistant |
| January 27, 2026 | Renamed to Moltbot after trademark concerns |
| January 30, 2026 | Renamed to OpenClaw. Growth explodes |
| ~90 days | Reaches 250,000+ GitHub stars, making it the fastest-growing open source project in history |
| February 2026 | First CVEs published. ClawJacked (CVE-2026-25253) allows RCE via WebSocket hijacking |
| February 14, 2026 | Steinberger announces move to OpenAI. Project transferred to open-source foundation |
| February 2026 | ClawHavoc: supply chain attack hits ClawHub marketplace. Malicious skills distribute infostealers |
| February 2026 | Security researchers find 40,000+ internet-exposed instances, 63% vulnerable |
| January–March 2026 | Anthropic quietly deploys server-side blocks on subscription OAuth tokens |
| February 20, 2026 | Anthropic updates documentation: OAuth for third-party tools explicitly banned |
| April 4, 2026 | Full enforcement. Pro/Max subscriptions only cover Claude Code, claude.ai, Claude Desktop, and Claude Cowork |

The speed is the point. OpenClaw passed 250,000 stars in roughly three months and has since climbed past 340,000. No framework, and no codebase, survives that kind of growth without accumulating serious security debt. And OpenClaw's nearly 500,000 lines of code across multiple frontend frameworks made review practically impossible.

📖 Read also: Routing Is Not Orchestration: Why OpenClaw's Architecture Misses the Point — A deep dive into why OpenClaw's hub-and-spoke model fails at production governance.

The Security Record: Why Numbers Matter

Let's be specific about what "200+ CVEs" means in practice.

Critical vulnerabilities (documented):

| CVE | Severity | Type |
|---|---|---|
| CVE-2026-25253 | CVSS 8.8 | RCE via Cross-Site WebSocket Hijacking ("ClawJacked") |
| CVE-2026-24763 | High | Command Injection via Docker PATH |
| CVE-2026-25157 | High | OS Command Injection via SSH |
| CVE-2026-25593 | High | AI Assistant RCE |
| CVE-2026-27487 | High | RCE vector |
| CVE-2026-25475 | Varies | Authentication Bypass |
| CVE-2026-26319 | Varies | SSRF |
| CVE-2026-26322 | Varies | Path Traversal |

These are the most critical named ones. Dedicated trackers like openclaw-security-monitor document 80+ advisories, while the Argus Security audit identified 512 total vulnerabilities including 8 critical. The NVD returns over 200 results for OpenClaw-related entries — a number that keeps growing.

The ClawHavoc supply chain attack:

OpenClaw's skill marketplace (ClawHub) had no mandatory code review for submissions. Attackers exploited this to distribute malicious skills containing the Atomic macOS Stealer and Windows infostealers with remote access trojans. The attack was documented by multiple security firms including CrowdStrike and Cisco.

Exposure at scale:

Security researchers identified 40,000+ internet-exposed OpenClaw instances. Of those, 63% were vulnerable — with 12,812 directly exploitable via RCE. The Moltbook platform (a hosted version) leaked 35,000 email addresses and 1.5 million agent tokens.

This isn't a "move fast and break things" story. This is what happens when a personal assistant tool designed for localhost gets deployed as internet-facing infrastructure without the security architecture to support it — the same cascade failure pattern I've documented in multi-agent systems, but at infrastructure scale.

📖 Read also: The Cascade of Doom: When AI Agents Hallucinate in Chains — What happens when security failures compound across agents without governance checkpoints.

Why the OAuth Ban Was Architecturally Predictable

OpenClaw's architecture is what I call a harness model: it wraps around an LLM provider's API using the user's authentication credentials. The user logs in, OpenClaw captures the OAuth token, and routes all requests through that token.

This creates three structural problems:

1. Credential accumulation. Every API key, every OAuth token lives in OpenClaw's config files — often in plaintext in ~/.openclaw/. One compromised instance leaks everything.

2. Trust boundary collapse. The same process that routes your WhatsApp messages also holds your Claude credentials. There's no isolation between the messaging layer and the authentication layer.

3. Platform dependency. Your entire tool depends on a single provider's willingness to let you use their auth tokens. When that provider says no — as Anthropic just did — your tool is dead.

The CLI subprocess pattern avoids all three. No credentials stored. No trust boundary shared. No platform auth dependency. I wrote about this in detail in part 1 of this series.

The Framework Landscape: Twelve Alternatives Compared

If you're evaluating alternatives, you need to understand the landscape. I've analyzed twelve frameworks across four categories. For each one, I checked: how does it handle auth? Is it affected by the ban? Does it have governance features?

Category 1: AI Coding Assistants

These are the tools most OpenClaw users will migrate to for code-related workflows.

| Framework | Auth Model | Affected by Ban? | GitHub Stars | Governance |
|---|---|---|---|---|
| Cursor | Own API keys + subscription | No (uses own infra) | Proprietary | None |
| Windsurf (Codeium) | Own API keys + subscription | No (uses own infra) | Proprietary | None |
| Aider | Direct API keys (user provides) | No (API key model) | ~30K | Git-based audit trail |
| Continue.dev | Direct API keys + local models | No (API key model) | ~25K | None |
| Codex CLI (OpenAI) | OpenAI API key | No (different provider) | ~20K | Sandboxed execution |

Key insight: None of these use Claude OAuth tokens. They either run their own infrastructure (Cursor, Windsurf) or require the user to provide API keys directly (Aider, Continue). The OAuth ban doesn't touch them — but they also don't offer multi-agent orchestration.

Category 2: Multi-Agent Frameworks

These are the frameworks that compete with OpenClaw's multi-agent capabilities.

| Framework | Auth Model | Affected by Ban? | GitHub Stars | Multi-Agent | Governance |
|---|---|---|---|---|---|
| CrewAI | Direct API keys | No | ~45K | Yes (role-based crews) | Basic logging |
| AutoGen | Direct API keys | No | ~55K | Yes (conversation patterns) | Session state |
| LangGraph | Direct API keys | No | ~25K | Yes (DAG state machines) | Checkpoint-based |
| OpenHands | Direct API keys | No | ~65K | Yes (event-sourced) | Docker isolation |
| OpenAI Agents SDK | OpenAI API key | No (different provider) | ~19K | Yes (minimal primitives) | Guardrails |

Key insight: Every serious multi-agent framework uses direct API keys, not OAuth tokens from consumer subscriptions. The OAuth arbitrage model was uniquely OpenClaw's approach. These frameworks were never at risk from the ban because they never depended on consumer auth. As I argued in Why Architecture Beats Models, the framework you pick matters far less than the architectural decisions underneath it.

Category 3: Orchestration & Workflow Platforms

For users who need visual workflow orchestration or low-code options.

| Framework | Auth Model | Affected by Ban? | GitHub Stars | Key Feature |
|---|---|---|---|---|
| n8n + AI nodes | API keys per node | No | ~34K | 500+ SaaS integrations |
| Dify | API keys | No | ~60K | Visual LLM app builder |
| Agno (ex-Phidata) | API keys | No | ~19K | 10,000x faster agent instantiation |

Category 4: CLI Subprocess Orchestration

This is the pattern I use. It's the only approach here in which the orchestration code never handles an API key or auth token at all.

| Framework | Auth Model | Affected by Ban? | GitHub Stars | Governance |
|---|---|---|---|---|
| VNX Orchestration | CLI subprocess (zero auth handling) | No (never used OAuth) | ~20 | Quality gates, receipt ledger, human-in-the-loop |

I'm putting VNX in its own category because the auth model is fundamentally different. VNX doesn't manage any credentials. It spawns official CLI binaries (claude, codex, gemini) and lets them handle authentication internally. The orchestration layer is completely decoupled from the auth layer.

This is why the OAuth ban didn't affect it — and why no future auth change will either.

📖 Read also: Agent Teams vs. VNX Orchestration: Architectural Comparison — How VNX's terminal track model compares to Anthropic's own Agent Teams.

📖 Read also: Why Architecture Beats Models: Lessons from 2400+ AI Agent Dispatches — Why the framework you choose matters less than the architectural patterns underneath it.

Three Architectural Patterns That Survive

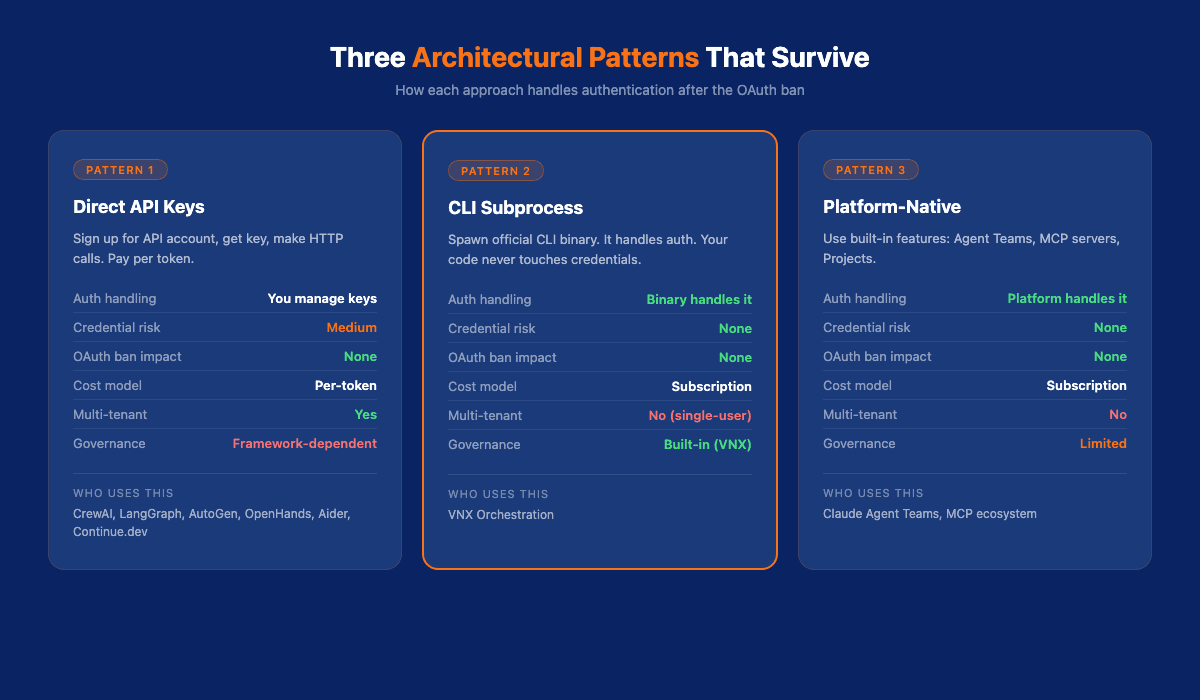

If you're rebuilding after the OAuth ban, you have three viable patterns. Each has tradeoffs.

Pattern 1: Direct API Keys

How it works: You sign up for an Anthropic API account, get an API key, and make direct HTTP calls to api.anthropic.com. You pay per token.
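To make the direct-key pattern concrete, here is a minimal sketch using only the Python standard library. It assumes the publicly documented Messages endpoint and headers (`x-api-key`, `anthropic-version`). The helper names `build_request` and `send`, the `max_tokens` value, and whatever model ID you pass in are my own illustrative choices, not something any framework above prescribes.

```python
import json
import urllib.request

API_URL = "https://api.anthropic.com/v1/messages"


def build_request(prompt: str, api_key: str, model: str) -> urllib.request.Request:
    """Build (but do not send) a single-turn Messages API request."""
    body = {
        "model": model,  # caller supplies a model ID valid for their account
        "max_tokens": 256,  # illustrative cap on the reply length
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        API_URL,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "x-api-key": api_key,  # the key is yours; you pay per token
            "anthropic-version": "2023-06-01",
            "content-type": "application/json",
        },
        method="POST",
    )


def send(request: urllib.request.Request, timeout: float = 60.0) -> dict:
    """Send the request and return the parsed JSON response."""
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.load(response)
```

In practice you would read the key from a secrets manager or an environment variable such as `ANTHROPIC_API_KEY` and call `send(build_request("Say hello.", key, model=...))`. Splitting build from send keeps the key handling in one place, which makes rotation easier to reason about.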

Who uses it: CrewAI, LangGraph, AutoGen, OpenHands, Aider, Continue.dev — almost every framework except OpenClaw.

Pros:

- Simple, well-documented, supported by every framework

- Pay for what you use

- Full control over your authentication

Cons:

- Costs scale with usage (no flat rate) — I documented the real cost of running AI agents in production and how multi-model routing cut my bill by 87%

- You manage API keys (rotation, secrets management)

- Per-token pricing can surprise you with autonomous agents

Best for: Teams with predictable workloads and proper secrets management.

Pattern 2: CLI Subprocess

How it works: You spawn the official claude CLI binary via subprocess.run() or equivalent. The binary handles authentication using the user's logged-in session. Your code never touches credentials.
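A minimal sketch of that call, assuming the CLI takes the prompt as its final argument and writes the answer to stdout. The `run_cli` helper is hypothetical, and the `-p` (print mode) flag in the usage note is an assumption about your installed `claude` version, so check `claude --help` before relying on it.

```python
import subprocess


def run_cli(argv: list[str], prompt: str, timeout: float = 300.0) -> str:
    """Run a CLI with the prompt appended as the last argument; return stdout.

    The child process authenticates however it is configured to. This code
    never reads, stores, or forwards a credential.
    """
    result = subprocess.run(
        [*argv, prompt],      # argument list, no shell, so no quoting issues
        capture_output=True,  # collect stdout and stderr
        text=True,            # decode bytes to str
        timeout=timeout,      # raises subprocess.TimeoutExpired on a hang
        check=False,          # exit code is inspected below
    )
    if result.returncode != 0:
        raise RuntimeError(
            f"{argv[0]} exited with {result.returncode}: {result.stderr.strip()}"
        )
    return result.stdout
```

Usage would look like `run_cli(["claude", "-p"], "Summarize README.md")`. Passing the command as a parameter means the same function can drive another CLI, and it can be tested without any binary installed.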

Who uses it: VNX Orchestration. This is also what anyone building automation on top of Claude Code is doing, whether they know it or not.

Pros:

- Zero credential management — the binary handles it

- Immune to auth policy changes

- Works with your existing Pro/Max subscription (for your own use)

- Simplest integration: one function call

Cons:

- Coupled to the CLI binary's interface (which can change)

- No programmatic rate limiting (you get what the CLI gives you)

- Each subprocess invocation has startup overhead

- Single-user only — the CLI authenticates as you

Best for: Solo developers and small teams orchestrating their own AI workflows. I cover the four production CLI patterns in detail, including a minimal orchestrator you can build in an afternoon.

Pattern 3: Platform-Native

How it works: You use Claude's built-in features — Agent Teams, MCP servers, Projects — instead of building external orchestration.

Pros:

- Officially supported, no compliance risk

- New features land here first

- No infrastructure to maintain

Cons:

- Limited to what Anthropic ships

- No custom governance layers

- Vendor lock-in

Best for: Teams that want zero operational overhead and can work within platform constraints.

Where VNX Fits — And Where It Doesn't

I'll be direct about this.

VNX Orchestration occupies a specific niche: governance-first multi-agent orchestration via CLI subprocess. It's not competing with CrewAI or LangGraph on breadth. It's not competing with Cursor or Aider on developer experience. It's doing something none of them do: treating every agent action as something that needs to be governed, audited, and approved.

What VNX offers that others don't:

- Quality gates — deterministic checks between agent output and action. No LLM guessing whether code is ready to merge

- Multi-terminal role isolation — the orchestrator (T0) never writes code. Workers (T1–T3) never make architectural decisions. Separation of concerns at the agent level

- Context rotation at scale — automatic session refresh when context quality degrades, keeping agents honest across long-running workflows

- Append-only receipt ledger — every dispatch, every output, every approval traced in NDJSON. When something breaks, you know exactly what happened

- Multi-provider headless agents — not just Claude. Gemini CLI, Codex review gates. Best model per task

- Self-learning intelligence injection — patterns from successful dispatches feed back into future ones via FTS5 database

- Formal compliance audit — line-by-line proof: zero OAuth tokens, zero API calls to Anthropic, zero SDK imports. Every HTTP call, every auth token, every domain reference documented
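To show what an append-only NDJSON receipt ledger can look like in its simplest form, here is a hedged sketch. It is not VNX's actual schema or code: the field names (`ts`, `event`) and the helpers `append_receipt` and `read_receipts` are illustrative assumptions.

```python
import json
from datetime import datetime, timezone
from pathlib import Path


def append_receipt(ledger: Path, event: str, **fields) -> dict:
    """Append one JSON object as a single line; existing lines are never rewritten."""
    record = {
        "ts": datetime.now(timezone.utc).isoformat(),  # UTC timestamp
        "event": event,                                # e.g. "dispatch", "approval"
        **fields,                                      # free-form details
    }
    with ledger.open("a", encoding="utf-8") as handle:  # "a" = append mode
        handle.write(json.dumps(record, sort_keys=True) + "\n")
    return record


def read_receipts(ledger: Path) -> list[dict]:
    """Read the ledger back in write order, skipping blank lines."""
    with ledger.open(encoding="utf-8") as handle:
        return [json.loads(line) for line in handle if line.strip()]
```

Append mode is a writer-side discipline, not an enforcement mechanism. If you need tamper evidence, you would add something on top, such as file permissions or hash chaining, which this sketch leaves out.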

What VNX lacks:

- Scale: ~20 GitHub stars. One developer. Working prototype, not production framework

- DX: Installation requires bash, Python, tmux, jq, fswatch. No one-click setup

- Portability: macOS + tmux only. The dashboard replacement (features 22–26) will fix this, but it's not done

- Ecosystem: No marketplace, no plugins, no community integrations

The honest comparison: if you need a polished, well-documented multi-agent framework today, use CrewAI or LangGraph with direct API keys. They have thousands of users, active communities, and production-tested APIs.

If you care about governance — about knowing what your agents did, why they did it, and whether a human approved it — that's where VNX is the only option I've found. And the CLI subprocess pattern at its core is something anyone can adopt in 10 lines of Python.

What I'd Build Starting Today

If I were starting fresh after the OAuth ban, here's what I'd do:

1. Pick your auth model first. Not your framework — your auth model. Direct API keys for multi-tenant. CLI subprocess for personal orchestration. Platform-native if you don't need custom governance.

2. Start with the simplest framework that fits. For most developers, that's Aider (single-agent coding), CrewAI (multi-agent), or LangGraph (complex workflows). Don't over-engineer. Anthropic themselves say: "The most successful implementations weren't using complex frameworks or specialized libraries."

3. Add governance incrementally. You don't need a full traceability architecture on day one. Start with a quality gate: one deterministic check between agent output and action. A file size check. A test runner. Something that catches obvious failures without burning LLM tokens. Then add receipt logging. Then approval flows. Build up, don't start complex.

4. Never build on auth you don't control. This is the lesson from the OAuth ban. If your orchestration layer depends on someone else's authentication system, you have a single point of failure that you can't fix. CLI subprocess and API keys put auth in your hands. OAuth gateways put it in someone else's.

5. Assume your framework will die. OpenClaw is the fastest-growing open source project in history and it just got its legs cut off. Keep your business logic separate from your framework. Make it portable. The framework is the plumbing — your governance, your quality gates, your audit trail should survive a framework swap.
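The quality gate from step 3 can start as small as the sketch below: a size check plus a gate that passes only if a command (a test runner, for example) exits with code 0. The names `GateResult`, `size_gate`, `command_gate`, and `first_failure`, and the default limits, are illustrative assumptions rather than any framework's API.

```python
import subprocess
from dataclasses import dataclass


@dataclass
class GateResult:
    passed: bool
    reason: str


def size_gate(text: str, max_bytes: int = 200_000) -> GateResult:
    """Reject empty or oversized agent output without calling any model."""
    size = len(text.encode("utf-8"))
    if size == 0:
        return GateResult(False, "empty output")
    if size > max_bytes:
        return GateResult(False, f"output is {size} bytes, limit is {max_bytes}")
    return GateResult(True, "size ok")


def command_gate(argv: list[str], timeout: float = 600.0) -> GateResult:
    """Pass only if the command exits with code 0 (e.g. a test runner)."""
    result = subprocess.run(argv, capture_output=True, text=True, timeout=timeout)
    if result.returncode != 0:
        return GateResult(False, f"{argv[0]} exited with {result.returncode}")
    return GateResult(True, "command ok")


def first_failure(results: list[GateResult]) -> GateResult:
    """Return the first failing gate, or a passing result if none failed."""
    for result in results:
        if not result.passed:
            return result
    return GateResult(True, "all gates passed")
```

Because none of this imports a framework, it is also a small instance of step 5: the gates survive a framework swap unchanged.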

Compliance Reminder

Important: Claude Pro and Max subscriptions are for your own business operations. Not for building commercial tools. Not for reselling access. Anthropic's terms are clear. I use VNX for my own work under my own subscription. The formal audit confirms zero OAuth usage, but the auth model only works for single-operator use.

If you need multi-user access to Claude, use the API with your own keys and your own billing.

Vincent van Deth

AI Strategy & Architecture

I build production systems with AI — and I've spent the last six months figuring out what it actually takes to run them safely at scale.

My focus is AI Strategy & Architecture: designing multi-agent workflows, building governance infrastructure, and helping organisations move from AI experiments to auditable, production-grade systems. I'm the creator of VNX, an open-source governance layer for multi-agent AI that enforces human approval gates, append-only audit trails, and evidence-based task closure.

Based in the Netherlands. I write about what I build — including the failures.