Most AI systems can tell you what happened. Mine can tell you why.

That distinction sounds subtle. It isn't. When your content agent produces output at 3 AM and a client sees something wrong at 9 AM, "what happened" gives you a log entry. "Why" gives you the dispatch ID, the input context, the governance rules that were active, the quality score, and the operator who approved it.

That's the difference between logging and traceability. And it's not a feature — it's an architectural decision that shapes every layer of your system.

Logging Is Not Traceability

Every production system has logs. Application logs, access logs, error logs. They tell you events occurred. What they don't tell you:

- Why that specific decision was made

- What inputs led to that output

- Which rules governed the process

- Who approved the result

- What quality threshold was applied

Logs are event-centric. Traceability is decision-centric. A log entry says "agent produced output at 14:32:07." A traceability receipt says "dispatch D-4821 was assigned to terminal T2, processed with governance rules G-L6 and G-L7, scored 0.87 on composite quality, and approved by operator V at 14:34:12."

That receipt is not a log. It's a forensic artifact.

Why Traceability Must Be Architecture, Not Afterthought

The EU AI Act (Article 12) requires high-risk AI systems to maintain automatic logging capabilities that enable full traceability of the system's operation from deployment through decommissioning. This isn't optional compliance — it's law, effective August 2026.

But regulatory pressure isn't why I built traceability into VNX Orchestration from day one. I built it because systems without traceability are systems you can't debug.

When you're running four agent terminals, each handling 15-20 dispatches per day, each dispatch involving multiple governance checks — you generate complexity faster than human cognition can follow. Without end-to-end traceability, you're debugging by guesswork.

I've written about choosing NDJSON over Postgres for the receipt ledger. That was a storage decision. This article is about the architectural patterns that make traceability possible in the first place.

The Receipt Pattern

Every dispatch in VNX Orchestration generates a receipt. Not a log entry — a receipt. The distinction matters:

{

"receipt_id": "R-2026-04-10-0847",

"dispatch_id": "D-4821",

"terminal": "T2",

"agent": "content-writer",

"timestamp": "2026-04-10T14:32:07Z",

"input_context": {

"task_type": "blog_draft",

"source": "content_calendar",

"governance_rules": ["G-L6", "G-L7"],

"prevention_rules_active": 3

},

"output": {

"artifact": "blog/2026-04-10-traceability/index.md",

"word_count": 2341,

"quality_score": 0.87

},

"approval": {

"status": "approved",

"approver": "operator-v",

"timestamp": "2026-04-10T14:34:12Z"

},

"cost": {

"input_tokens": 24580,

"output_tokens": 8921,

"model": "claude-sonnet-4-5-20250514",

"cost_usd": 0.31

}

}This receipt is append-only (governance rule G-L6). Once written, it cannot be modified or deleted. Every receipt links to a dispatch ID, which links to an input context, which links to the governance rules active at the time.

That chain — dispatch → context → rules → output → quality → approval — is traceability. And it's built into the architecture, not bolted on after the fact.

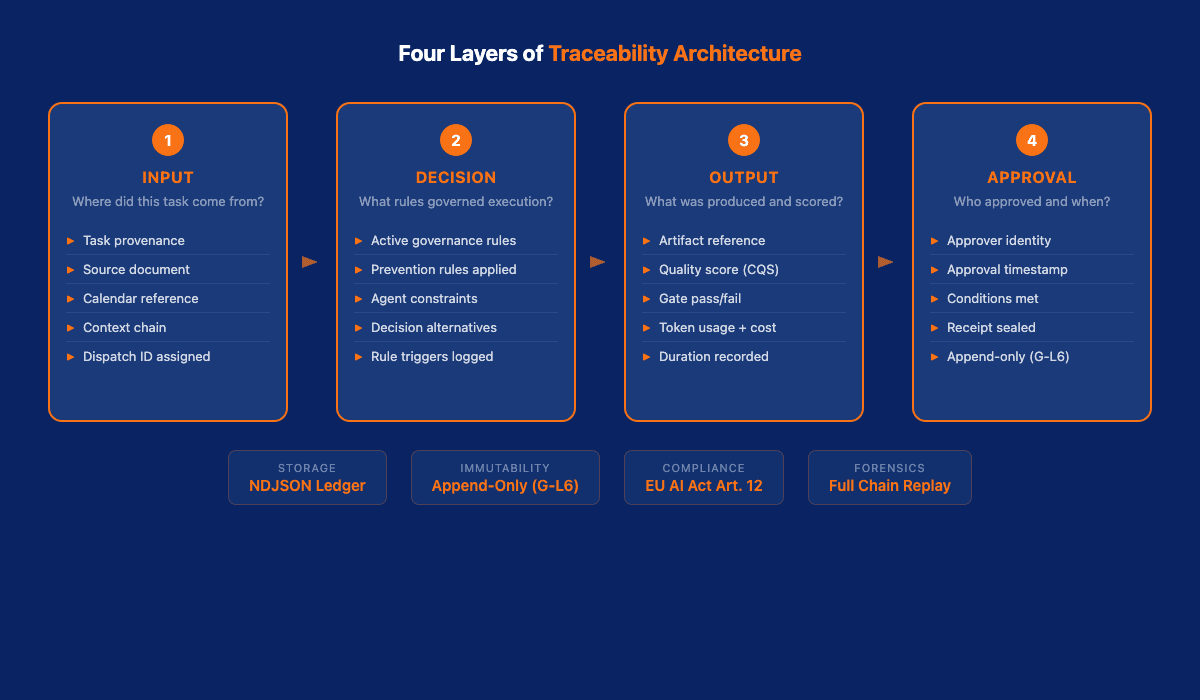

Four Layers of Traceability

Traceability in a multi-agent system operates at four distinct layers. Miss one and you have gaps.

Layer 1: Input Traceability

Where did this task come from? Who or what created it? What context was available?

In VNX, every dispatch carries its provenance. A blog task traces back to the content calendar entry, which traces back to the content strategy document, which traces back to the keyword research. If a dispatch produces bad output, I can trace the chain backward to find where the instructions went wrong.

This sounds obvious. Most agent frameworks skip it entirely. They accept a prompt and execute. The prompt's origin? Not recorded.

Layer 2: Decision Traceability

What decisions did the agent make during execution? Which governance rules constrained those decisions?

This is where traceability diverges most sharply from logging. A log records what happened. Decision traceability records what could have happened and why one path was chosen over another.

In VNX, governance rules are code-enforced constraints (Glass Box Governance). Rule G-L7 prevents injection attacks by auditing every input. Rule G-L6 enforces append-only receipts. When a dispatch runs, the receipt records which rules were active and whether any were triggered.

Layer 3: Output Traceability

What was produced? What quality score did it receive? Did it pass the quality gate?

Every output in VNX goes through an independent quality assessment before it reaches the operator. The quality score, the assessment criteria, and the pass/fail decision are all part of the receipt. If output quality degrades over time, the receipts show exactly when and why.

Layer 4: Approval Traceability

Who approved this output? When? Under what conditions?

This layer closes the loop. In a human-on-the-loop system, the operator approves outputs based on quality scores and governance status. That approval is itself a traceable event — timestamped, attributed, and linked to the specific receipt.

Traceability vs. Observability

Observability tells you the system's current state. Traceability tells you how it got there.

In practice, you need both. The VNX Intelligence System provides observability — monitoring dispatches in real-time, detecting anomalies, surfacing patterns. But when something goes wrong, observability tells you "terminal T3 is producing below-threshold output." Traceability tells you "terminal T3 started degrading at dispatch D-4790 because context rotation was delayed by 12 dispatches, causing the model to hallucinate references from earlier tasks."

That level of forensic capability is only possible when traceability is architectural. You can't reconstruct decision chains from application logs.

The Regulatory Case

The EU AI Act's Article 12 requires "automatic logging of events" for high-risk AI systems. But the regulation's intent goes beyond logging — it requires traceability sufficient for:

- Post-market monitoring (Article 72) — tracking system behavior over time

- Serious incident reporting (Article 73) — explaining what went wrong and why

- Human oversight (Article 14) — enabling operators to understand and intervene

A MarkTechPost analysis of transparent AI agent architectures describes hash-chained SQLite ledgers that cryptographically link each event to its predecessor, making tampering detectable. That's one approach. VNX uses append-only NDJSON with file-system-level immutability — simpler, harder to break, easier to inspect with standard Unix tools.

The point isn't the specific technology. The point is that compliance-grade traceability requires architectural commitment, not a logging library.

Common Anti-Patterns in Traceability

Before looking at what traceability looks like when it works, it's worth examining how teams get it wrong. I've seen these patterns in client assessments and open source projects alike.

Anti-Pattern 1: Logging everything, tracing nothing. Teams generate gigabytes of logs — every API call, every model response, every token count — and call it traceability. It isn't. Volume is not traceability. If you can't reconstruct the decision chain for a specific output within five minutes, you don't have traceability. You have a storage bill.

Anti-Pattern 2: Traceability as a separate service. Some teams build traceability as a sidecar — a separate service that listens to events and reconstructs chains after the fact. This works until it doesn't. Network partitions, event ordering issues, and schema drift between the main system and the tracing service create gaps. Traceability needs to be in the execution path, not observing from the side.

Anti-Pattern 3: Mutable audit trails. If your traceability records live in a SQL database with UPDATE permissions, you don't have an audit trail. You have a suggestion. Append-only storage isn't a nice-to-have — it's a requirement. The moment someone can modify a receipt, the entire chain of trust breaks. This is why G-L6 (append-only receipts) exists as a non-negotiable governance rule in VNX.

Anti-Pattern 4: Tracing outputs but not inputs. Many systems record what was produced but not what went in. When an agent generates a bad blog draft, knowing it scored 0.45 doesn't help. Knowing that the input context was missing the style document, or that the source material was from an outdated strategy doc — that's what lets you fix the problem at the root.

Anti-Pattern 5: No correlation IDs across system boundaries. In multi-agent systems, a single business operation spans multiple dispatches, multiple terminals, and multiple quality checks. Without a correlation ID that links all of these together, you end up with individual receipts that tell isolated stories. The dispatch ID in VNX serves this purpose — every event in a chain references the original dispatch.

What This Looks Like in Practice

Three weeks ago, a content dispatch produced a blog draft that referenced a governance rule that doesn't exist. The quality gate caught it — score 0.62, below the 0.75 threshold.

Without traceability, I'd have a failed dispatch and a vague error. With traceability, I had:

- Receipt R-2026-03-21-1204 — shows the dispatch to terminal T2 with input context referencing the governance documentation

- Governance check — shows G-L7 (injection audit) passed, but the input context contained a stale reference to "G-L9" which doesn't exist in the current ruleset

- Root cause — the content calendar entry referenced documentation from before the governance rules were renumbered

- Fix — updated the content calendar source, re-dispatched, receipt R-2026-03-21-1247 shows quality score 0.91

Total debugging time: 8 minutes. Without traceability: potentially hours of guessing.

A Second Incident: The Silent Quality Degradation

A more subtle example happened in early March. The intelligence terminal (T3) had been producing daily market summaries without any quality gate failures for two weeks. Everything looked fine from the outside — scores above threshold, no escalations, no errors.

But the receipt chain told a different story. When I reviewed receipts R-2026-03-03 through R-2026-03-14, I noticed a pattern: the input context size had been growing steadily. The intelligence agent was accumulating context from previous runs without pruning, causing each subsequent summary to carry stale data from earlier analyses.

The quality gate didn't catch it because the summaries were technically well-written. The problem was upstream — in the input, not the output. Without input traceability (Layer 1), this drift would have continued indefinitely. The receipts let me trace the context growth back to a missing cleanup step in the dispatch configuration. A one-line fix to the dispatch template resolved it.

Building Traceability In

If you're designing a multi-agent system, here's what traceability-as-architecture means in practice:

1. Every dispatch gets a unique ID. Not a UUID — a human-readable, chronologically sortable identifier. D-2026-04-10-0847 tells me more than a3f8c2d1-... ever will.

2. Every receipt is append-only. Once written, a receipt cannot be modified. If a correction is needed, write a new receipt referencing the original. This is governance rule G-L6 in VNX, and it's non-negotiable.

3. Every decision records its constraints. Which governance rules were active? Which prevention rules applied? What quality threshold was set? Record the rules, not just the outcome.

4. Every output links to its input. The output artifact references the dispatch that created it. The dispatch references the input context. The context references the source. Break this chain and you lose traceability.

5. Every approval is an event. Human approval isn't a boolean flag on a database record. It's a timestamped event in the receipt ledger with an attributed approver.

6. Build forensic queries from day one. Traceability is only useful if you can query it. I maintain a small set of standard queries: "show me all dispatches to T2 in the last week with quality scores below 0.80," "show me the input context growth for T3 over time," "show me all escalations and their root causes." These queries are shell scripts that parse the NDJSON receipt ledger with standard Unix tools — grep, jq, sort. No special infrastructure needed.

7. Test your traceability chain regularly. Once a month, I pick a random output and trace it backward through the entire chain: output to receipt, receipt to dispatch, dispatch to input context, input context to source document. If any link is broken — a missing dispatch ID, a receipt without a governance section, an input without provenance — I fix the gap before it compounds.

Traceability Is Not Optional

The systems being built today — multi-agent workflows, autonomous pipelines, AI-powered business processes — are too complex to debug without traceability. And too consequential to run without accountability.

Logging tells you something happened. Traceability tells you why, how, and who approved it. One is a debugging tool. The other is an architectural foundation.

Every decision deserves a receipt. Build your systems accordingly.

Read also: Why I Chose NDJSON Over Postgres for My AI Agent Audit Trail — the storage layer underneath the traceability architecture described in this article.

Read also: Glass Box Governance: Making Multi-Agent AI Systems Transparent by Default — the governance framework that makes traceability enforceable.

Sources: EU AI Act Article 12, MarkTechPost: Transparent AI Agents with Audit Trails, Insoftex: AI Architecture 2026

📚 Glass Box Governance series

- One Terminal to Rule Them All: How I Orchestrate Claude, Codex, and Gemini Without Them Knowing About Each Other

- Receipts, Not Chat Logs: What 2,472 AI Agent Dispatches Taught Me About Governance

- The Cascade of Doom: When AI Agents Hallucinate in Chains

- Why I Chose NDJSON Over Postgres for My AI Agent Audit Trail

- Claude Agent Teams vs. Building Your Own: What Anthropic Solved (And What They Left Out)

- The External Watcher Pattern: How I Observe AI Agents Without Trusting Their Self-Reports

- Why Architecture Beats Models: Lessons from 2400+ AI Agent Dispatches

- Async Quality Gates: Why AI Agents Don't Get to Decide When They're Done

- From Human-in-the-Loop to Human-on-the-Loop: A Production Graduation Path ← you are here

- Traceability as Architecture: Designing AI Systems Where Every Decision Has a Receipt ← you are here

- Decision-Making Architecture: Why Autonomous Agents Need Governance, Not Just Instructions

- Context Rotation at Scale: How VNX Keeps AI Agents Honest After 10,000 Dispatches

- Autonomous Agent Patterns: 5 Production-Tested Approaches for Agents That Run Without You

- Governance Scoring: How to Measure Whether Your AI Agent Deserves More Autonomy

Vincent van Deth

AI Strategy & Architecture

I build production systems with AI — and I've spent the last six months figuring out what it actually takes to run them safely at scale.

My focus is AI Strategy & Architecture: designing multi-agent workflows, building governance infrastructure, and helping organisations move from AI experiments to auditable, production-grade systems. I'm the creator of VNX, an open-source governance layer for multi-agent AI that enforces human approval gates, append-only audit trails, and evidence-based task closure.

Based in the Netherlands. I write about what I build — including the failures.