I Almost Built This on Postgres. Here's Why I Didn't.

In October 2025, when I started scaling the VNX Glass Box Governance system across four AI agent terminals, I did what every engineer does: I reached for a database. PostgreSQL specifically. It's what I know. It's what the industry uses. It has ACID guarantees, concurrent writers, proper backup tooling—everything you're supposed to want.

Then at 2 AM, running dispatch #847, something broke silently in the audit trail. A transaction rolled back. The query that should have recorded the failure never executed. I didn't find out until 36 hours later when I was debugging why a cost anomaly report showed $0 spent on that entire dispatch batch.

That's when I realized: the audit trail for multi-agent AI systems isn't a database problem. It's a flight recorder problem.

Flight recorders on aircraft don't need ACID transactions. They don't need concurrent writers or foreign key constraints. They need one thing: when the system is falling apart, the record must be saved. Atomically. Appended. Never rolled back.

I switched to NDJSON that week. In the 1,625 dispatches since, I haven't lost a record.

The Problem: Why Databases Fail for Governance

When you're orchestrating four AI agent terminals—each running 3-6 concurrent tasks, each task spawning subtasks, each subtask generating actions—you're generating audit events faster than you might think. Completion receipts. Failure receipts. Quality advisories. Cost allocations. State mutations.

A traditional database approach looks reasonable:

CREATE TABLE dispatch_receipts (
  id uuid PRIMARY KEY,
  dispatch_id uuid NOT NULL,
  terminal_id varchar NOT NULL,
  action varchar NOT NULL,
  timestamp timestamptz NOT NULL,
  data jsonb NOT NULL,
  created_at timestamptz DEFAULT NOW()
);

CREATE INDEX idx_dispatch_terminal ON dispatch_receipts(dispatch_id, terminal_id);

This works fine at 10 dispatches per day. It works at 100. But at 2,472 dispatches (some completing in seconds, others running for hours, all generating multiple receipt events) you hit problems:

- Write contention: Four terminals writing to the same table simultaneously causes lock timeouts.

- Transaction overhead: Every receipt becomes a prepared statement + transaction commit. That's 50ms per event. At 20+ events per dispatch, that adds up to a second or more of commit latency per dispatch, and you're suddenly waiting on I/O.

- Silent failures: A transaction fails, rolls back, and your application doesn't know the audit event disappeared. You discover it later when reconciling.

- Restoration complexity: If the database goes down mid-dispatch, which state is truth? The database state before the crash? The running dispatch that thinks it completed? The receipts that were only partially written?

PostgreSQL is powerful for many things. It's terrible for immutable append-only logs, especially in distributed systems where you need guarantees that the record will survive, always, even when your system is failing.

Why NDJSON Specifically

NDJSON stands for "Newline Delimited JSON"—and yes, it's as simple as it sounds. One valid JSON object per line, separated by newlines. Each line is independently parseable.

{"dispatch_id":"d-001","terminal":"T0","action":"dispatch_created","timestamp":"2026-02-27T09:15:32Z"}
{"dispatch_id":"d-001","terminal":"T0","action":"track_assigned","timestamp":"2026-02-27T09:15:33Z"}
{"dispatch_id":"d-001","terminal":"T1","action":"task_started","timestamp":"2026-02-27T09:15:35Z"}

Why did I choose this over other formats? Five reasons:

1. Crash-Resilient Partial Writes

In NDJSON, if your write process crashes halfway through an event, only the incomplete line is corrupted. The previous 847 events are perfectly valid.

# Corrupt line at EOF, but everything before it is safe
$ tail -1 receipts.ndjson
{"dispatch_id":"d-002","terminal":"T2","action":"task_fa

# You just delete that line and carry on
# (negative counts need GNU head; BSD/macOS head does not accept them)
$ head -n -1 receipts.ndjson > receipts.clean && mv receipts.clean receipts.ndjson

In a database, a partial write might corrupt the entire WAL segment, lock the table, or leave the schema in an inconsistent state.
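The same recovery can happen at read time instead of by rewriting the file. Here is a minimal Python sketch of a reader that tolerates a truncated final line. The name `read_receipts` is hypothetical, and it assumes the writer always terminates a complete record with a newline, so a line without one can only be the leftover of a crashed write.

```python
import json
from pathlib import Path
from typing import Iterator


def read_receipts(path: Path) -> Iterator[dict]:
    """Yield every complete receipt; stop at a truncated final line."""
    with path.open("rb") as f:
        for raw in f:
            # Assumption: complete records always end with a newline.
            # A line without one must be the last line of the file,
            # left behind by a write that never finished.
            if not raw.endswith(b"\n"):
                break
            line = raw.strip()
            if line:
                yield json.loads(line)
```

A malformed line in the middle of the file still raises, which is probably what you want: that would be real corruption, not a crash artifact.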

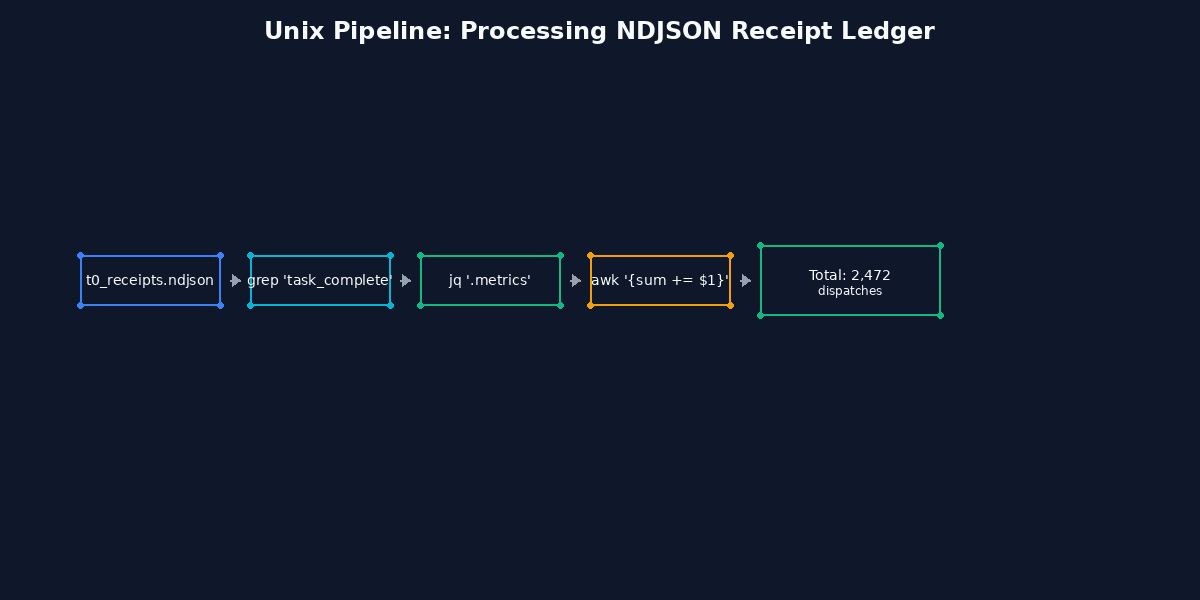

2. Streamable (Unix Philosophy)

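NDJSON works with the classic Unix text tools, several of which date back to the 1970s: tail, grep, awk. Add jq, a much newer tool built in the same spirit, and you have a query layer. These tools were built for sequential stream processing and they're incredibly efficient.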

# Real-time tail of a specific terminal
$ tail -f receipts.ndjson | jq 'select(.terminal=="T1")'

# Cost analysis across all dispatches
$ cat receipts.ndjson | jq -s 'group_by(.dispatch_id) | map({
    dispatch: .[0].dispatch_id,
    total_cost: map(.cost_usd // 0) | add
  })'

# Find all failures with latency > 5s
$ cat receipts.ndjson | jq 'select(.action=="completion" and .duration_ms > 5000 and .status=="failed")'

Try doing that efficiently on Postgres with 2,472 dispatches. You'll write complex SQL. NDJSON gives you powerful queries with pipes and filters.

3. No Server, No Migrations

NDJSON is a file. That's it. No server process. No connection pool. No migration scripts. No schema versioning headaches.

At 4 AM when something's broken, I don't troubleshoot a database. I SSH to the server, run tail receipts.ndjson, and I'm looking at exactly what happened. No query parsing. No index lookup. Just sequential events.

4. Human-Readable by Default

Every receipt is valid JSON. I can read it. I can parse it with any language. I can grep for patterns. I can pipe it to visualization tools. I can email a line to someone and they instantly understand the structure.

A database dump is a pile of binary or text that requires loading it back into a database to make sense of.

5. Append-Only Semantics Built-In

NDJSON lends itself to append-only use. The format can't stop anyone from editing the file, but the convention is simple: you never UPDATE a receipt and you never DELETE one. If something was wrong, you append a correction receipt that supersedes the previous one. This forces a cultural shift toward immutability, which is exactly what you want for governance.

{"dispatch_id":"d-003","receipt_id":"r-1","action":"cost_calculated","cost_usd":12.34,"timestamp":"2026-02-27T10:00:00Z"}
{"dispatch_id":"d-003","receipt_id":"r-2","action":"cost_corrected","receipt_id_superseded":"r-1","cost_usd":11.87,"reason":"api_call_recount","timestamp":"2026-02-27T10:05:00Z"}

The Actual Receipt Schema

Here's what a real VNX receipt looks like. I'm showing you the actual structure from .vnx-data/receipts.ndjson:

Dispatch Created Receipt

{
  "receipt_id": "rcpt-202602270915-d001-created",
  "dispatch_id": "d-001-2026-02-27-governance-pass",
  "type": "dispatch_created",
  "timestamp": "2026-02-27T09:15:32.847Z",
  "terminal_id": "T0",
  "dispatch_name": "implement-external-watcher",
  "status": "created",
  "tracks_assigned": ["T1", "T2", "T3"],
  "priority": "high",
  "estimated_duration_minutes": 180
}

Task Started Receipt

{
  "receipt_id": "rcpt-202602270915-t001-started",
  "dispatch_id": "d-001-2026-02-27-governance-pass",
  "type": "task_started",
  "timestamp": "2026-02-27T09:15:35.201Z",
  "terminal_id": "T1",
  "task_id": "task-001-components",
  "action": "implement-shadcn-dropdown",
  "branch": "feat/external-watcher-component",
  "claude_model": "claude-opus-4-6"
}

Completion Receipt

{
  "receipt_id": "rcpt-202602270915-t001-completed",
  "dispatch_id": "d-001-2026-02-27-governance-pass",
  "type": "completion",
  "timestamp": "2026-02-27T10:28:14.504Z",
  "terminal_id": "T1",
  "task_id": "task-001-components",
  "status": "success",
  "duration_ms": 4139000,
  "commit_hash": "a7f3e9c2",
  "lines_changed": 247,
  "files_changed": 3,
  "cost_usd": 3.24,
  "tokens_used": {
    "input": 45821,
    "output": 12340
  }
}

Failure Receipt

{
  "receipt_id": "rcpt-202602270915-t002-failed",
  "dispatch_id": "d-001-2026-02-27-governance-pass",
  "type": "failure",
  "timestamp": "2026-02-27T11:52:19.738Z",
  "terminal_id": "T2",
  "task_id": "task-002-tests",
  "status": "failed",
  "duration_ms": 843000,
  "error_code": "COVERAGE_THRESHOLD_UNMET",
  "error_message": "Test coverage dropped below 82% threshold",
  "error_summary": "external-watcher.test.ts coverage 78.4%",
  "retry_allowed": true,
  "retry_count": 0
}

Quality Advisory Receipt

{
  "receipt_id": "rcpt-202602270915-qgate-advisory",
  "dispatch_id": "d-001-2026-02-27-governance-pass",
  "type": "quality_advisory",
  "timestamp": "2026-02-27T12:15:44.921Z",
  "terminal_id": "T3",
  "gate_name": "performance_check",
  "severity": "warning",
  "message": "Component bundle size increased 4.2%",
  "current_bundle_kb": 156.8,
  "previous_bundle_kb": 150.4,
  "acceptable_increase_percent": 5.0,
  "recommendation": "Consider lazy-loading WatcherUI subcomponents"
}

Each receipt is completely independent. You can parse them in any order. You can tail the file in real-time. You can grep for specific dispatch IDs. You can process them with jq in a pipeline.

How to Query the Ledger with Unix Tools

This is where the magic happens. Because receipts are NDJSON, you have the entire Unix toolkit at your fingertips.

Find all tasks for a specific dispatch

$ cat .vnx-data/receipts.ndjson | jq 'select(.dispatch_id=="d-001-2026-02-27-governance-pass")'

Calculate total cost by terminal

$ cat .vnx-data/receipts.ndjson | \
    jq -s 'group_by(.terminal_id) | map({
      terminal: .[0].terminal_id,
      total_cost: map(.cost_usd // 0) | add,
      task_count: map(select(.type=="completion")) | length
    })'

Find slowest tasks across all dispatches

$ cat .vnx-data/receipts.ndjson | \
    jq 'select(.type=="completion") | {task_id, duration_ms, cost_usd}' | \
    jq -s 'sort_by(-.duration_ms) | .[0:10]'

Real-time monitoring of terminal T1

$ tail -f .vnx-data/receipts.ndjson | jq 'select(.terminal_id=="T1")'

Check for anomalies (tasks under 30 seconds that cost > $5)

$ cat .vnx-data/receipts.ndjson | jq 'select(.type=="completion" and .duration_ms < 30000 and .cost_usd > 5)'

None of these require loading data into a query engine. They execute instantly on a file with 2,472 dispatch events.
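One caveat on the grouped queries: `jq -s` slurps the whole file into memory before grouping. If the ledger outgrows that, the cost-by-terminal query can be done in a single streaming pass. This is a rough Python sketch, not how VNX does it. The function name `cost_by_terminal` is hypothetical, and it assumes the `terminal_id`, `cost_usd`, and `type` fields from the receipts shown earlier.

```python
import json
from collections import defaultdict


def cost_by_terminal(lines):
    """Aggregate cost and completed-task count per terminal, one line at a time."""
    totals = defaultdict(lambda: {"total_cost": 0.0, "task_count": 0})
    for line in lines:
        if not line.strip():
            continue  # tolerate blank lines between records
        receipt = json.loads(line)
        entry = totals[receipt["terminal_id"]]
        # Receipts without a cost (e.g. task_started) contribute 0.
        entry["total_cost"] += receipt.get("cost_usd", 0)
        if receipt.get("type") == "completion":
            entry["task_count"] += 1
    return dict(totals)
```

Passing an open file object works because Python iterates a file line by line: `cost_by_terminal(open(".vnx-data/receipts.ndjson"))`.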

The Three Things NDJSON Can't Do (And How VNX Solves Them)

NDJSON is phenomenal for immutable audit trails, but it has blind spots. Let me be honest about them:

1. No Random Access

You can't efficiently query "give me receipt #847 without reading the first 846." For a 2MB NDJSON file with 2,472 receipts, this isn't a problem. For a 10GB file with millions of receipts, sequential scans become slow.

Solution: Every hour, VNX materializes the current receipt state into a JSON snapshot at .vnx-data/state/receipts-index-{timestamp}.json with a simple hash map:

{
  "dispatch_index": {
    "d-001-2026-02-27-governance-pass": {
      "receipt_ids": ["rcpt-1", "rcpt-2", "rcpt-3"],
      "status": "completed",
      "total_cost": 45.67
    }
  },
  "terminal_timeline": {
    "T0": ["d-001", "d-002", "d-003"],
    "T1": ["d-001", "d-004", "d-007"]
  },
  "generated_at": "2026-02-27T13:00:00Z"
}

This gives you O(1) lookups for recent data, while the NDJSON file stays the immutable source of truth.
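To make the materialization step concrete, here is a minimal Python sketch of folding the ledger into a snapshot of that shape. It is an illustration under assumptions, not the VNX implementation: `build_index` is a hypothetical name, the "last status seen wins" rule is my guess at how a dispatch status could be derived, and the `"unknown"` default is invented for the sketch.

```python
import json
from collections import defaultdict
from datetime import datetime, timezone


def build_index(lines):
    """Fold an iterable of NDJSON lines into a lookup-friendly snapshot."""
    dispatch_index = defaultdict(
        lambda: {"receipt_ids": [], "status": "unknown", "total_cost": 0.0}
    )
    terminal_timeline = defaultdict(list)
    for line in lines:
        line = line.strip()
        if not line:
            continue
        receipt = json.loads(line)
        entry = dispatch_index[receipt["dispatch_id"]]
        entry["receipt_ids"].append(receipt["receipt_id"])
        entry["total_cost"] += receipt.get("cost_usd", 0)
        if "status" in receipt:
            # Assumption: the most recent status in the ledger wins.
            entry["status"] = receipt["status"]
        timeline = terminal_timeline[receipt["terminal_id"]]
        if receipt["dispatch_id"] not in timeline:
            timeline.append(receipt["dispatch_id"])
    return {
        "dispatch_index": dict(dispatch_index),
        "terminal_timeline": dict(terminal_timeline),
        "generated_at": datetime.now(timezone.utc).isoformat(timespec="seconds"),
    }
```

Because the snapshot is derived entirely from the ledger, it can be deleted and rebuilt at any time. That is what keeps the NDJSON file the source of truth.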

2. No Joins

NDJSON doesn't support joins. If you want "all tasks that failed for dispatches where T0 reassigned work," you can't express that as a single query.

Solution: Again, periodic materialization. When materializing state, VNX pre-computes common joins:

$ cat .vnx-data/state/receipts-index-latest.json | jq '.dispatch_to_failures'

3. No Real-Time Aggregations

You can't subscribe to a live feed saying "alert me when total cost this hour exceeds $100." NDJSON is a file, not a message bus.

Solution: VNX runs a lightweight watcher process that tails the NDJSON file and publishes state changes to .vnx-data/state/realtime-metrics.json every 30 seconds. External processes (dashboards, monitoring tools) watch that file instead.

This is the External Watcher Pattern—and that's Part 4 of this series.
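Until then, here is a rough Python sketch of the polling half of such a watcher. It is not the actual VNX watcher. All function names and the shape of the `state` dict are hypothetical, and it tracks a running total rather than a per-hour window to keep the example short. Two ideas carry over regardless: only consume bytes up to the last newline, and publish the metrics file with write-then-rename so readers never see a half-written file.

```python
import json
import os
import tempfile


def read_new_lines(path, offset):
    """Return (complete_lines, new_offset) for bytes appended since offset."""
    with open(path, "rb") as f:
        f.seek(offset)
        chunk = f.read()
    # Consume only up to the last newline; a partial trailing line is
    # left in place and picked up by the next poll.
    end = chunk.rfind(b"\n") + 1
    return chunk[:end].splitlines(), offset + end


def publish_metrics(metrics, out_path):
    """Write to a temp file in the same directory, then rename over the target."""
    directory = os.path.dirname(os.path.abspath(out_path))
    fd, tmp_path = tempfile.mkstemp(dir=directory, suffix=".tmp")
    with os.fdopen(fd, "w") as f:
        json.dump(metrics, f)
    os.replace(tmp_path, out_path)


def poll_once(ledger_path, out_path, state):
    """Process newly appended receipts and republish the metrics file."""
    lines, state["offset"] = read_new_lines(ledger_path, state["offset"])
    for raw in lines:
        if raw.strip():
            receipt = json.loads(raw)
            state["total_cost_usd"] += receipt.get("cost_usd", 0)
            state["receipts_seen"] += 1
    publish_metrics(
        {
            "total_cost_usd": state["total_cost_usd"],
            "receipts_seen": state["receipts_seen"],
        },
        out_path,
    )
```

A driver would call `poll_once` in a loop with `time.sleep(30)` between calls, starting from `state = {"offset": 0, "total_cost_usd": 0.0, "receipts_seen": 0}`.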

Honest Limitations

I need to be transparent about what NDJSON isn't good for:

Concurrent writes from multiple processes: NDJSON relies on each record being appended in one piece. Opening the file with O_APPEND makes every write land at the end of the file, but the 4KB atomicity figure that is often quoted (PIPE_BUF) applies to pipes, not regular files, so simultaneous writes from multiple processes can still interleave. VNX solves this by routing all receipt writes through a single receipt processor (running in T0) that appends them sequentially.

Complex transactions: If you need "update A and B atomically or fail both," NDJSON can't help. You need a database. VNX doesn't have that requirement because receipts are always append-only—no modifications.

Deletions and corrections: NDJSON doesn't delete. Corrections are new receipts. This is a feature for governance, but it means your ledger grows indefinitely. We mitigate by archiving files monthly.

Query optimization: A database can use indexes and query plans. NDJSON is always a linear scan. For 2,472 receipts (~ 5MB of JSON), this is fine. For 50 million receipts, you'd want a proper database or a data warehouse.
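Since corrections are new receipts rather than edits, readers need a way to get the current view. Here is a minimal Python sketch, assuming the `receipt_id` and `receipt_id_superseded` fields from the correction example earlier. The function name `effective_receipts` is hypothetical.

```python
import json


def effective_receipts(lines):
    """Return receipts that no later correction has superseded."""
    receipts = [json.loads(line) for line in lines if line.strip()]
    # Collect every receipt_id that some correction points at.
    superseded = {
        r["receipt_id_superseded"]
        for r in receipts
        if "receipt_id_superseded" in r
    }
    return [r for r in receipts if r["receipt_id"] not in superseded]
```

The original record stays in the ledger untouched. Only the derived view drops it, so the full history of what was believed and when remains auditable.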

When You Should Actually Use a Database Instead

I'm not anti-database. NDJSON is perfect for immutable append-only audit trails. But if you need any of these, use Postgres:

- Mutable state: If your records genuinely need in-place updates, use a database. (Receipts shouldn't be updated, though. If your governance events need updates, that's a smell. Fix your dispatch model.)

- Complex joins: If you're frequently correlating data across multiple dimensions, a relational database wins.

- Concurrent writers: Multiple processes writing simultaneously to the same ledger need database transactions.

- Real-time analytics: If you need sub-second aggregations on billions of rows, a data warehouse (Redshift, BigQuery) is the right tool.

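- Retention policies: If you need to automatically delete data older than 90 days, a database makes that a single DELETE statement or a dropped partition, though you still have to schedule it. With NDJSON you rotate and archive whole files from a cron job.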

For VNX, NDJSON is the right call because:

- Write pattern: Append-only, one writer (T0 receipt processor)

- Read pattern: Sequential scans, batch queries, occasionally recent snapshots

- Failure mode: Better to lose a network connection than a database transaction

- Debugging: I need to SSH to the server and read the ledger directly
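The "append-only, one writer" pattern from that list can be sketched in a few lines of Python. This is an illustration under assumptions, not the actual T0 receipt processor, and the class name `ReceiptWriter` is hypothetical. It fails loudly, and that matters: an exception on a failed write is the opposite of the silent rollback that started this whole story.

```python
import json
import os


class ReceiptWriter:
    """Single-process appender: one write call and one fsync per receipt."""

    def __init__(self, path):
        # O_APPEND makes every write land at the current end of the file.
        self._fd = os.open(path, os.O_WRONLY | os.O_CREAT | os.O_APPEND, 0o644)

    def append(self, receipt: dict) -> None:
        # json.dumps escapes newlines inside strings, so each record
        # is guaranteed to occupy exactly one line.
        data = (json.dumps(receipt, separators=(",", ":")) + "\n").encode("utf-8")
        written = os.write(self._fd, data)
        if written != len(data):
            # A short write leaves a partial line behind; surface it
            # instead of continuing as if the receipt was recorded.
            raise OSError(f"short write: {written} of {len(data)} bytes")
        # Flush to stable storage before reporting success.
        os.fsync(self._fd)

    def close(self) -> None:
        os.close(self._fd)
```

Calling fsync on every receipt trades throughput for durability. With a single writer and a handful of events per dispatch, that trade seems reasonable, but it is a choice, not a requirement of the format.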

The Bigger Picture: Why This Matters for AI Agent Governance

Here's what I've learned from 2,472 dispatches: in AI systems, the audit trail IS the system.

When Claude running in terminal T1 hallucinates and generates code that breaks tests in terminal T2, I need to see:

- Exactly what Claude was asked

- Exactly what Claude produced

- The timestamp of that production

- The cost incurred

- Whether T3 caught the problem

- Whether the correction was successful

If any of these records are missing, lost, or updated in place, I've lost observability. I can't debug. I can't improve. I can't build trust.

A database with UPDATE and DELETE permissions creates ambiguity. "Was this record always this value, or was it changed?" A file where every event is appended in order gives me a complete, chronological truth about what my system did.

That's why I chose NDJSON. Not because it's trendy. Not because "flat files are simpler." But because immutability and observability are non-negotiable for multi-agent AI systems.

This post is part of the Glass Box Governance series.

Previous: The Cascade of Doom: When AI Agents Hallucinate in Chains. What happens when one hallucinated import triggers three agents to build a feature that should never exist?

Next: One Terminal to Rule Them All: Multi-Model AI Orchestration. How do you coordinate Claude, Codex, and Gemini from a single dashboard without them knowing about each other?

📚 Glass Box Governance series

- One Terminal to Rule Them All: How I Orchestrate Claude, Codex, and Gemini Without Them Knowing About Each Other

- Receipts, Not Chat Logs: What 2,472 AI Agent Dispatches Taught Me About Governance

- The Cascade of Doom: When AI Agents Hallucinate in Chains

- Why I Chose NDJSON Over Postgres for My AI Agent Audit Trail ← you are here

- Claude Agent Teams vs. Building Your Own: What Anthropic Solved (And What They Left Out)

- The External Watcher Pattern: How I Observe AI Agents Without Trusting Their Self-Reports

- Why Architecture Beats Models: Lessons from 2400+ AI Agent Dispatches

- Async Quality Gates: Why AI Agents Don't Get to Decide When They're Done

- From Human-in-the-Loop to Human-on-the-Loop: A Production Graduation Path

- Traceability as Architecture: Designing AI Systems Where Every Decision Has a Receipt

- Decision-Making Architecture: Why Autonomous Agents Need Governance, Not Just Instructions

- Context Rotation at Scale: How VNX Keeps AI Agents Honest After 10,000 Dispatches

- Autonomous Agent Patterns: 5 Production-Tested Approaches for Agents That Run Without You

- Governance Scoring: How to Measure Whether Your AI Agent Deserves More Autonomy

Vincent van Deth

AI Strategy & Architecture

I build production systems with AI — and I've spent the last six months figuring out what it actually takes to run them safely at scale.

My focus is AI Strategy & Architecture: designing multi-agent workflows, building governance infrastructure, and helping organisations move from AI experiments to auditable, production-grade systems. I'm the creator of VNX, an open-source governance layer for multi-agent AI that enforces human approval gates, append-only audit trails, and evidence-based task closure.

Based in the Netherlands. I write about what I build — including the failures.